Reinforcement Learning – FrozenLake AI Agent

A comparative study of tabular reinforcement learning algorithms on the FrozenLake-v1 environment from OpenAI Gym (Gymnasium).

Benchmarks Q-Learning against classic planning baselines (value/policy iteration) with convergence and reward analysis.

The Problem

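Understanding the convergence properties of different RL algorithms is fundamental to AI research. The challenge was to demonstrate the efficiency gap between model-free learning (Q-Learning), which must estimate values from sampled experience, and model-based planning (Value Iteration and Policy Iteration), which has direct access to the transition probabilities, in stochastic environments.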

The Solution

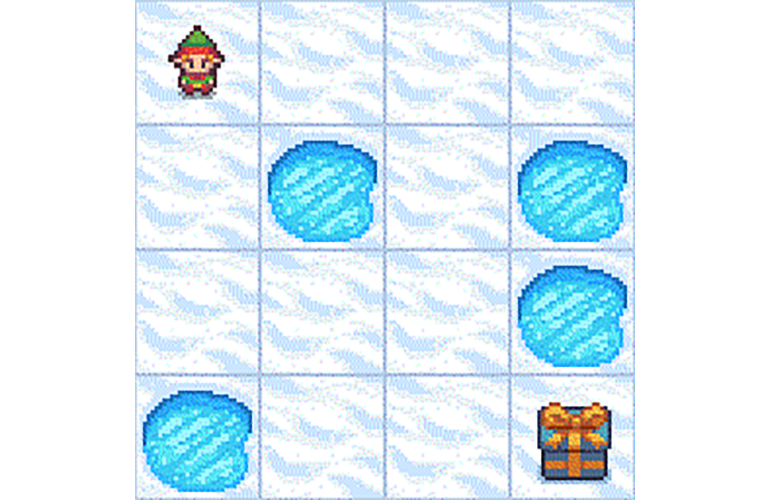

I implemented and compared Q-Learning, Value Iteration, and Policy Iteration on the FrozenLake-v1 environment. I then visualized the Q-table heatmaps and reward convergence curves to analyze how the agent handles "slippery" state transitions, where the agent may slide in a direction perpendicular to the one it chose.
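To make the "slippery" transitions and the Q-Learning update concrete, here is a minimal sketch in plain Python. It does not use Gymnasium. Instead it assumes a hand-built copy of the standard 4x4 map, and the names `step` and `q_learning`, the hyperparameter values, and the 100-step episode cap are all illustrative assumptions rather than the project's actual code.

```python
import random

# Assumed copy of the standard 4x4 layout: S start, F frozen, H hole, G goal.
MAP = ["SFFF", "FHFH", "FFFH", "HFFG"]
N = 4
# FrozenLake action encoding: 0=left, 1=down, 2=right, 3=up.
MOVES = {0: (0, -1), 1: (1, 0), 2: (0, 1), 3: (-1, 0)}


def step(state, action, rng, slippery=True):
    """Sample one transition and return (next_state, reward, done)."""
    if slippery:
        # The agent moves in the intended direction or in one of the two
        # perpendicular directions, each with probability 1/3.
        action = rng.choice([(action - 1) % 4, action, (action + 1) % 4])
    row, col = divmod(state, N)
    d_row, d_col = MOVES[action]
    # Moving into a wall leaves the agent on its current tile.
    row = min(max(row + d_row, 0), N - 1)
    col = min(max(col + d_col, 0), N - 1)
    tile = MAP[row][col]
    return row * N + col, float(tile == "G"), tile in "HG"


def q_learning(episodes=2000, alpha=0.1, gamma=0.99, epsilon=0.1,
               seed=0, slippery=True):
    """Tabular Q-Learning with an epsilon-greedy behaviour policy."""
    rng = random.Random(seed)
    q = [[0.0] * 4 for _ in range(N * N)]
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # assumed per-episode step cap
            if rng.random() < epsilon:
                action = rng.randrange(4)  # explore
            else:
                # Exploit, breaking ties at random so an all-zero table
                # does not lock the agent into a single action.
                best = max(q[state])
                action = rng.choice(
                    [a for a in range(4) if q[state][a] == best])
            next_state, reward, done = step(state, action, rng, slippery)
            # TD target: no bootstrapping from terminal states.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
            if done:
                break
    return q
```

Calling `q_learning(slippery=True)` returns a 16x4 table whose rows can be reshaped into the kind of Q-table heatmap described above. Because the only reward is 1 at the goal, every entry stays between 0 and 1.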

Architecture & Implementation

- Environment: OpenAI Gym (Gymnasium).

- Algorithms: Hand-written Bellman backups (Value Iteration, Policy Iteration) and temporal-difference learning (Q-Learning).

- Visualization: Matplotlib for plotting reward curves and state-value heatmaps.
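The Bellman backup behind the planning baselines can be sketched as follows. This is an illustrative Value Iteration over an explicit transition table, assuming a hand-built copy of the standard 4x4 map rather than the model exposed by Gymnasium. The helper names `build_model`, `q_from_v`, and `value_iteration`, and the values of `gamma` and `tol`, are assumptions made for this example.

```python
# Assumed copy of the standard 4x4 layout: S start, F frozen, H hole, G goal.
MAP = ["SFFF", "FHFH", "FFFH", "HFFG"]
N = 4
MOVES = {0: (0, -1), 1: (1, 0), 2: (0, 1), 3: (-1, 0)}  # left, down, right, up


def build_model(slippery):
    """Return model[s][a] = list of (prob, next_state, reward, done)."""
    model = {}
    for s in range(N * N):
        row, col = divmod(s, N)
        model[s] = {}
        for a in range(4):
            if MAP[row][col] in "HG":
                # Holes and the goal are absorbing terminal states.
                model[s][a] = [(1.0, s, 0.0, True)]
                continue
            # Slippery: intended move or either perpendicular move, 1/3 each.
            outcomes = [(a - 1) % 4, a, (a + 1) % 4] if slippery else [a]
            transitions = []
            for b in outcomes:
                r = min(max(row + MOVES[b][0], 0), N - 1)
                c = min(max(col + MOVES[b][1], 0), N - 1)
                tile = MAP[r][c]
                transitions.append(
                    (1.0 / len(outcomes), r * N + c,
                     float(tile == "G"), tile in "HG"))
            model[s][a] = transitions
    return model


def q_from_v(model, values, s, a, gamma):
    """Expected one-step return of taking action a in state s."""
    return sum(p * (rew + gamma * (0.0 if done else values[s2]))
               for p, s2, rew, done in model[s][a])


def value_iteration(model, gamma=0.99, tol=1e-10):
    """Repeat Bellman optimality backups until the largest change < tol."""
    values = [0.0] * len(model)
    while True:
        delta = 0.0
        for s in model:
            best = max(q_from_v(model, values, s, a, gamma) for a in model[s])
            delta = max(delta, abs(best - values[s]))
            values[s] = best  # in-place (Gauss-Seidel style) update
        if delta < tol:
            break
    # Greedy policy with respect to the converged values.
    policy = [max(model[s], key=lambda a: q_from_v(model, values, s, a, gamma))
              for s in model]
    return values, policy
```

Running `value_iteration(build_model(slippery=False))` and `value_iteration(build_model(slippery=True))` gives the state values for both variants. Reshaped to 4x4, each list of values is the kind of data a state-value heatmap would display.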

Results & Impact

The Value Iteration approach achieved 100% success on the non-slippery map, where transitions are deterministic and the optimal policy can follow a hole-free path every time, while the Q-Learning agent demonstrated robust adaptation to the stochastic transition model of the slippery variant.
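A success rate like the one quoted above can be estimated by rolling out a fixed policy many times and counting how often it reaches the goal. The sketch below is one way to do that in plain Python, assuming a hand-built copy of the standard 4x4 map. `run_episode`, `success_rate`, and the hand-written `SAFE_PATH_POLICY` are hypothetical names for illustration, not the project's evaluation code.

```python
import random

# Assumed copy of the standard 4x4 layout: S start, F frozen, H hole, G goal.
MAP = ["SFFF", "FHFH", "FFFH", "HFFG"]
N = 4
MOVES = {0: (0, -1), 1: (1, 0), 2: (0, 1), 3: (-1, 0)}  # left, down, right, up

# Hypothetical policy (one action per state) that follows the hole-free
# path 0 -> 4 -> 8 -> 9 -> 13 -> 14 -> 15; other entries are arbitrary.
SAFE_PATH_POLICY = [1, 0, 0, 0,
                    1, 0, 0, 0,
                    2, 1, 0, 0,
                    0, 2, 2, 0]


def run_episode(policy, rng, slippery, max_steps=100):
    """Follow the policy from the start tile; return True on reaching G."""
    state = 0
    for _ in range(max_steps):
        action = policy[state]
        if slippery:
            # Intended move or either perpendicular move, 1/3 each.
            action = rng.choice([(action - 1) % 4, action, (action + 1) % 4])
        row, col = divmod(state, N)
        row = min(max(row + MOVES[action][0], 0), N - 1)
        col = min(max(col + MOVES[action][1], 0), N - 1)
        tile = MAP[row][col]
        if tile == "G":
            return True
        if tile == "H":
            return False
        state = row * N + col
    return False  # ran out of steps without reaching the goal


def success_rate(policy, slippery, episodes=1000, seed=0):
    """Fraction of episodes in which the policy reaches the goal."""
    rng = random.Random(seed)
    wins = sum(run_episode(policy, rng, slippery) for _ in range(episodes))
    return wins / episodes
```

On the deterministic map, `success_rate(SAFE_PATH_POLICY, slippery=False)` is exactly 1.0, because the same hole-free path is followed in every episode. With `slippery=True` the same policy falls short of that, which is why a policy tuned for the stochastic model is needed there.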